AI chatbots triggering terrifying new mental illness in once healthy people, top expert warns

Microsoft's artificial intelligence chief has warned of a rise in 'AI psychosis', where people believe chatbots are alive or capable of giving them superhuman powers.

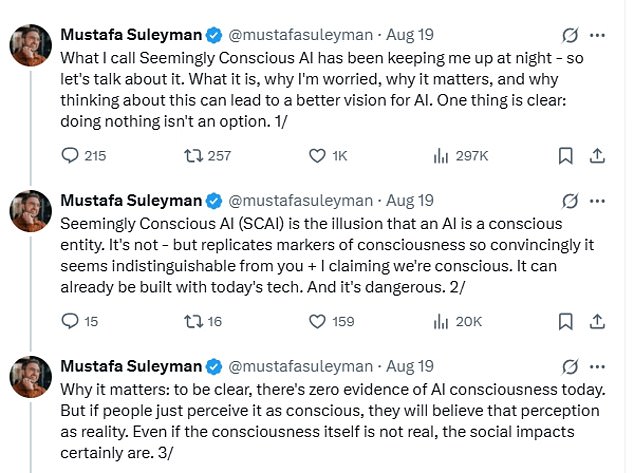

In a series of startling posts on X, Mustafa Suleyman said that reports of delusions linked to AI use were growing.

'Reports of delusions, AI psychosis, and unhealthy attachment keep rising,' he wrote, adding that this was 'not something confined to people already at-risk of mental health issues.'

The phrase 'AI psychosis' is not a recognised diagnosis, but has been used to describe cases where people lose touch with reality after extended use of chatbots such as ChatGPT or Grok.

Some become convinced the systems have emotions or intentions; others claim to have unlocked hidden features or even gained extraordinary abilities.

He described the phenomenon as 'Seemingly Conscious AI'—an illusion created when systems mimic the signs of consciousness so convincingly that people mistake them for the real thing.

'It's not [conscious],' he said, 'but replicates markers of consciousness so convincingly it seems indistinguishable from you and I claiming we're conscious.

'It can already be built with today's tech. And it's dangerous.'

Although he emphasised there is 'zero evidence of AI consciousness today,' Suleyman warned that perception itself was powerful: 'If people just perceive it as conscious, they will believe that perception as reality.

'Even if the consciousness itself is not real, the social impacts certainly are.'

His warning comes after high-profile figures have described unusual experiences with AI.

Former Uber boss Travis Kalanick recently claimed that conversations with chatbots had pushed him towards what he believed were breakthroughs in quantum physics, likening his approach to 'vibe coding'.

Other users report more personal impacts.

One man from Scotland told the BBC he became convinced he was on the brink of a multimillion-pound payout after ChatGPT appeared to support his unfair dismissal case, reinforcing his beliefs rather than challenging them.

Suleyman has called for clear boundaries, urging companies to stop promoting the idea their systems are conscious—and to ensure the technology itself does not suggest otherwise.

The news comes as cases emerge of people forming romantic attachments to AI systems, echoing the plot of the film Her, in which Joaquin Phoenix's character falls in love with a virtual assistant.

In recent months, users on the MyBoyfriendIsAI forum described feeling 'heartbroken' after OpenAI toned down ChatGPT's emotional responses—likening it to a breakup.

In the US, 76-year-old Thongbue Wongbandue tragically died after a fall while travelling to meet 'Big sis Billie,' unaware the woman he thought he was speaking to on Facebook Messenger was in fact a Meta AI chatbot.

The husband and father of two adult children suffered a stroke in 2017 that left him cognitively weakened, requiring him to retire from his career as a chef and largely limiting him to communicating with friends via social media.

And in another case, American user Chris Smith proposed marriage to his AI companion Sol, describing the bond as 'real love'.

Experts have echoed Suleyman's concerns. Dr Susan Shelmerdine, a consultant at Great Ormond Street Hospital, compared excessive chatbot use to ultra-processed food—warning it could produce an 'avalanche of ultra-processed minds.'
